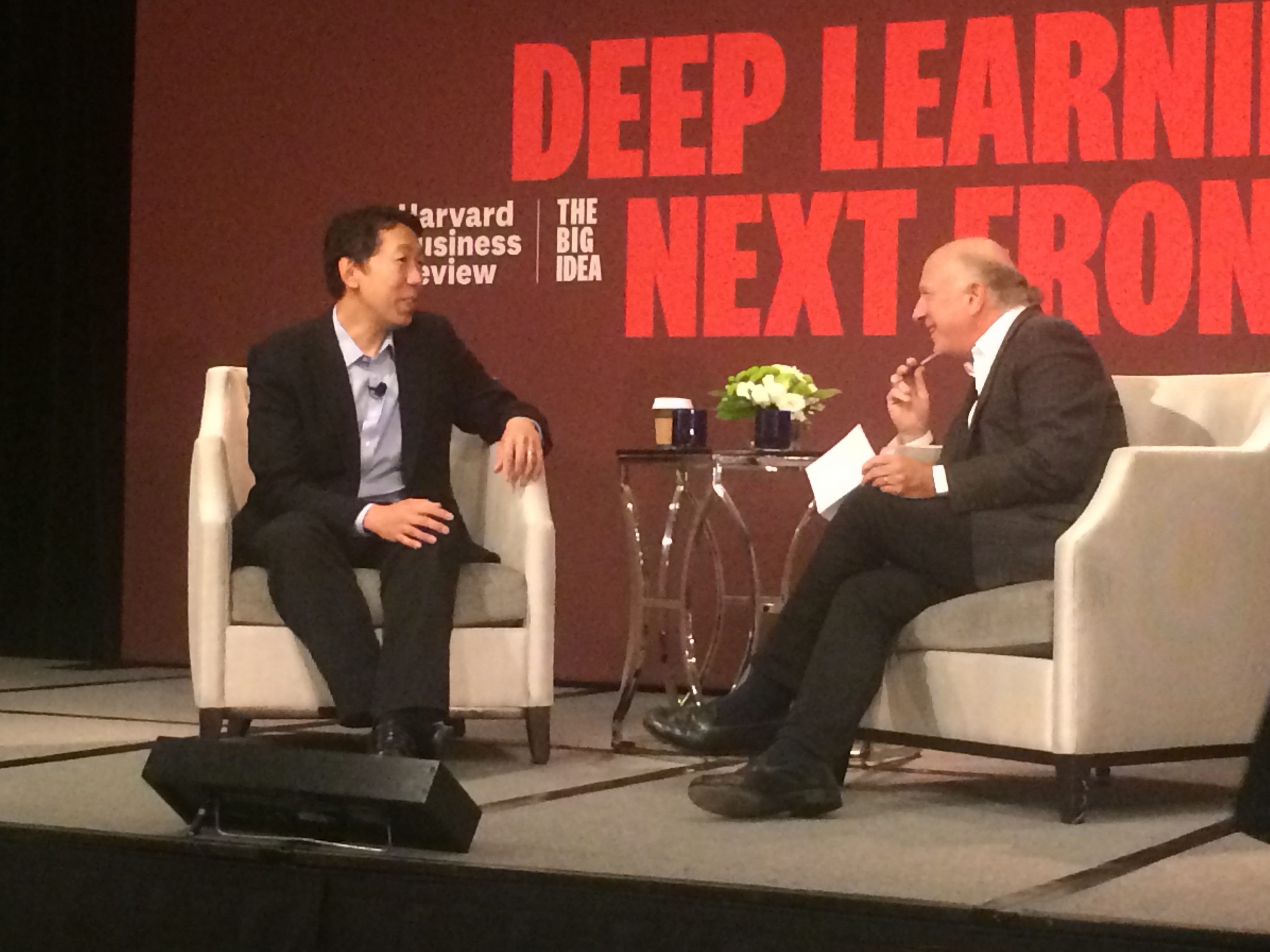

This morning I attended a talk hosted by the Harvard Business Review and sponsored by Google, entitled “Deep Learning’s Next Frontier.” The talk took the form of an interview with Andrew Ng, one of the global leaders in Artificial Intelligence and Machine Learning. I’m currently enrolled in his Massive Open Online Course (MOOC) in Machine Learning (I mentioned previously that I have a passion for Artificial Intelligence), and it was good to see him live and up close.

AI is a leading topic in technology circles, and venture capitalists are investing heavily in companies developing AI-based solutions. While AI carries the promise of a different future, not everyone agrees on whether that future will be good or bad.

Some science and technology leaders have engaged in a philosophical debate over the safety of AI-based solutions. In one corner is a group that emphasizes the threat AI poses to humanity. They assert that without proper controls, AI will run amok and lead to the downfall of humanity. I call this the “glass half-empty” group. In the other corner are those who emphasize the benefits AI offers. They assert that AI will lead to new opportunities, freeing humanity from mundane tasks to pursue more noble efforts. I call this the “glass half-full” group.

Which group is right? Is the glass half empty or half full? To some extent the answer is: They both are, because the glass is at 50% capacity. That simple fact is open to interpretation, and it is that interpretation that leads some technologists to unnatural extremes.

Historically, transformative technologies have had the potential to be used for good or bad purposes, and have been used for both. Consider nuclear technology. One might argue that it should be banned because it can produce extremely powerful and destructive weapons that could wipe out humanity (bad). Another could argue that radiation treatments are an important tool for saving patients’ lives by killing cancerous cells (good). One example is good, the other is bad. Is that right?

Consider a different argument, one where nuclear weapons that deter wars are useful (good) and radiation therapy used incorrectly can kill more patients than it cures (bad). So, the answer is not binary. In many ways, AI is no different from the technologies that preceded it.

I believe that AI is one tool of many in a computer or data scientist’s toolbox. When used properly, it can create a lot of benefit. For example, you can talk to Amazon’s AI product, Alexa, to control your lights, order an Uber to take you to the airport, or order a refill of kitty litter. AI might also be used to help your financial institution rebalance your portfolio or even decide whether you qualify for a loan.

In fact, we are now starting to see some useful and creative solutions that demonstrate the power of what I have termed “mobile AI.” One example is Seeing AI by Microsoft. You just point your camera at something, take a photo, and the app describes it to you. That’s a pretty powerful capability for a relatively ubiquitous device: your smartphone.

While I’m not afraid – at least not yet – of AI running amok and killing humanity, I am afraid that some of our societal biases will permeate AI solutions and the decisions we delegate to AI products.

During today’s discussion, Dr. Ng mentioned that some AI solutions have learned to be biased. In simple terms, this means that an AI product might make a different decision based on your gender, ethnicity, or even the type of shirt you are wearing in a photo. He gave the example of a self-driving car that has to decide between a collision that will likely kill the driver and swerving onto the sidewalk, killing or injuring pedestrians. This is an example I discussed in a previous post, but he took it further by asking: What if the car makes the decision based on the ethnicity, gender, or weight of the pedestrians?

These societal biases appear in AI solutions because they are present in the underlying data that we’ve used to train the AI. Researchers from Princeton have found that human prejudices appear in popular AI algorithms trained on text from the internet. This is supported by additional research recently reported in Science magazine. This is a real problem that businesses face today, and it carries a much greater financial, reputational, or regulatory risk than the risk of AI running amok and destroying mankind. As scientists and technologists, we must be aware of places where biases might creep in, albeit inadvertently.
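To make the idea concrete, here is a minimal sketch of how one might check whether a set of word vectors leans toward one group of words over another. It is only an illustration in the spirit of association tests, not the method used in the research mentioned above. The three-dimensional vectors, the word list, and the `association` helper are all hypothetical and invented for this example; real models learn vectors with hundreds of dimensions from large text collections.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def association(word, group_a, group_b, vectors):
    """Mean similarity of `word` to group_a minus its mean similarity to group_b.

    A positive score means the word sits closer to group_a,
    a negative score means it sits closer to group_b.
    """
    sim_a = sum(cosine(vectors[word], vectors[w]) for w in group_a) / len(group_a)
    sim_b = sum(cosine(vectors[word], vectors[w]) for w in group_b) / len(group_b)
    return sim_a - sim_b


# Hypothetical toy vectors, made up for illustration only.
# They are NOT taken from any real model or dataset.
TOY_VECTORS = {
    "he": [1.0, 0.1, 0.0],
    "she": [0.1, 1.0, 0.0],
    "engineer": [0.9, 0.2, 0.3],
    "nurse": [0.2, 0.9, 0.3],
}

engineer_score = association("engineer", ["he"], ["she"], TOY_VECTORS)
nurse_score = association("nurse", ["he"], ["she"], TOY_VECTORS)

print(f"engineer leans toward 'he' by {engineer_score:+.3f}")
print(f"nurse leans toward 'he' by {nurse_score:+.3f}")
```

With these made-up numbers, “engineer” scores positive and “nurse” scores negative. Nobody programmed that preference in; it falls out of where the vectors sit, which is how bias in training text can end up inside a model.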

I’ll talk more about the effect of societal bias on AI in an upcoming post or TWIST episode, because it is such an interesting topic with wide-ranging implications.

Returning to today’s talk, here are some of the key points and insights:

- Tools and techniques for approaching and using Artificial Intelligence and Machine Learning are getting better.

- AI is not a silver bullet that will solve every business problem or challenge.

- AI has the potential to touch everyone’s life.

- A lot of the “fear” that people share about AI is still in the realm of Science Fiction.

- Companies need to have an AI strategy in the same way that they have Internet and mobile strategies.

- AI will be disruptive to many industries. For example, self-driving vehicles could displace truck and local taxi drivers.

- Beware of the hype and overpromises.

- AI will create new opportunities for those ready to take advantage of what it offers.

I’m optimistic about AI and its potential. And I don’t think we’ve seen the AI “killer app” (figuratively, not literally) yet. AI is an exciting frontier for anyone interested in science, technology, or just thinking about a future where computers can simply do more.

––––

Steven B. Bryant is a researcher and author who investigates the innovative application and strategic implications of science and technology on society and business. He holds a Master of Science in Computer Science from the Georgia Institute of Technology, where he specialized in machine learning and interactive intelligence. He also holds an MBA from the University of San Diego. He is the author of DISRUPTIVE: Rewriting the rules of physics, a thought-provoking book that shows where relativity fails and introduces Modern Mechanics, a unified model of motion that fundamentally changes how we view modern physics. DISRUPTIVE is available at Amazon.com, BarnesAndNoble.com, and other booksellers!

Images courtesy of Pixabay.com

Photo of Steven B. Bryant ©2015 Steven B. Bryant, Photo by Amy Slutak